What does mean squared error tell us?

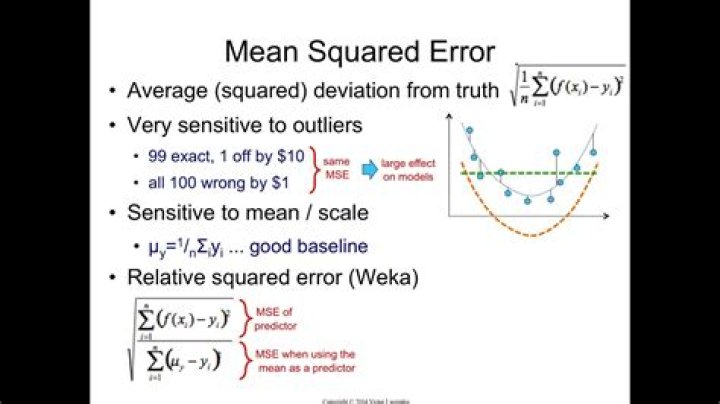

MSE measures how close estimates or forecasts are to the actual values: the lower the MSE, the closer the forecasts are to the actuals. It is used as an evaluation metric for regression models, where a lower value indicates a better fit.
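As a minimal sketch of that idea, the snippet below computes MSE for two forecasts of the same series. The function name `mean_squared_error` and the sample numbers are illustrative assumptions, not taken from any particular library or dataset.

```python
def mean_squared_error(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("inputs must not be empty")
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


# Made-up data for illustration only.
actual = [3.0, 5.0, 7.0, 9.0]
close_forecast = [3.0, 5.0, 8.0, 9.0]   # only one value is off, by 1
far_forecast = [1.0, 8.0, 4.0, 12.0]    # every value is off by 2 or 3

mse_close = mean_squared_error(actual, close_forecast)
mse_far = mean_squared_error(actual, far_forecast)

print(mse_close)  # 0.25
print(mse_far)    # 7.75
```

The forecast that tracks the actual values more closely gets the smaller MSE.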

What is an error loss function?

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some “cost” associated with the event.

How much mean squared error is good?

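There is no universally acceptable threshold for MSE. The lower the MSE, the more accurate the predictions, because the predicted values match the actual data more closely; this shows up as an improved correlation between actual and predicted values as MSE approaches zero. Because MSE is expressed in the squared units of the target variable, a given value is only meaningful relative to the scale of the data.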

What is a normal loss function?

The Upside-Down Normal Loss Function (UDNLF) is a weighted loss function that has accurately modeled losses in a product engineering context. The function’s scale parameter can be adjusted to account for the actual percentage of material failing to work at specification limits.

How does a loss function work?

What’s a loss function? At its core, a loss function is incredibly simple: It’s a method of evaluating how well your algorithm models your dataset. If your predictions are totally off, your loss function will output a higher number. If they’re pretty good, it’ll output a lower number.

Should MSE be high or low?

Low. There is no correct value for MSE, but the lower the value the better, and an MSE of 0 means the predictions match the actual values exactly.

What value of MSE is good?

There is no correct value for MSE. Simply put, the lower the value the better, and 0 means the predictions match the actual values exactly. Because there is no absolute target, MSE is most useful for comparing prediction models and choosing one over another.
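A rough sketch of using MSE for model selection follows. The two candidate models (`model_a`, `model_b`) and the data are hypothetical, chosen only to show the comparison; in practice the scores would be computed on held-out data.

```python
def mean_squared_error(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical observations (x, y) and two hypothetical candidate models.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.1, 4.9, 7.2, 8.8]

candidates = {
    "model_a": lambda x: 2.0 * x + 1.0,
    "model_b": lambda x: 3.0 * x,
}

# Score every candidate on the same data so the MSE values are comparable.
scores = {
    name: mean_squared_error(ys, [model(x) for x in xs])
    for name, model in candidates.items()
}

# Pick the candidate with the lowest MSE.
best_name = min(scores, key=scores.get)
print(scores)
print("selected:", best_name)
```

The absolute numbers matter less than their ordering: the comparison is only valid because both models are scored on the same data.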

Why is MSE squared?

The mean squared error (MSE) tells you how close a regression line is to a set of points. It does this by taking the distances from the points to the regression line (these distances are the “errors”), squaring them, and averaging the results. Squaring removes negative signs, so positive and negative errors cannot cancel each other out, and it gives more weight to larger errors.
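To see why the negative signs matter, here is a small sketch with made-up numbers in which every prediction is wrong by 2, yet the plain average of the signed errors is zero. The variable names are illustrative.

```python
# Made-up data: each prediction misses by exactly 2, alternating in direction.
actual = [2.0, 4.0, 6.0, 8.0]
predicted = [4.0, 2.0, 8.0, 6.0]

errors = [a - p for a, p in zip(actual, predicted)]   # [-2.0, 2.0, -2.0, 2.0]

# Signed errors cancel, hiding the fact that every prediction is off.
mean_error = sum(errors) / len(errors)

# Squaring makes every term non-negative, so nothing cancels.
mean_squared_error = sum(e ** 2 for e in errors) / len(errors)

print(mean_error)          # 0.0
print(mean_squared_error)  # 4.0
```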

What is a 0-1 loss function?

The 0-1 loss function is an indicator function that returns 1 when the target and output are not equal and 0 otherwise:

0-1 Loss: L(y, ŷ) = 1 if y ≠ ŷ, and 0 if y = ŷ

By comparison, the quadratic loss is a commonly used symmetric loss function.
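The indicator definition above can be sketched in a few lines. The function name `zero_one_loss` and the labels are illustrative assumptions; averaging the per-example losses gives the misclassification rate.

```python
def zero_one_loss(target, output):
    """Return 1 when target and output differ, 0 when they are equal."""
    return 0 if target == output else 1


# Made-up classification labels for illustration.
targets = ["cat", "dog", "dog", "bird"]
outputs = ["cat", "dog", "cat", "bird"]

losses = [zero_one_loss(t, o) for t, o in zip(targets, outputs)]

# The mean 0-1 loss is the fraction of examples that were misclassified.
error_rate = sum(losses) / len(losses)

print(losses)      # [0, 0, 1, 0]
print(error_rate)  # 0.25
```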

Why is a loss function important?

Loss functions play an important role in any statistical model: they define the objective against which the model's performance is evaluated, and the model's parameters are learned by minimizing the chosen loss function. In short, a loss function defines what a good prediction is and isn’t.
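As one possible illustration of learning parameters by minimizing a loss, the sketch below fits a one-parameter model `y = w * x` with plain gradient descent on MSE. The model form, the data, the learning rate, and the step count are all assumptions chosen to keep the example short; real models usually have more parameters and rely on a library optimizer.

```python
def mse_loss(w, xs, ys):
    """MSE of the one-parameter model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_gradient(w, xs, ys):
    """Derivative of the MSE above with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)


# Made-up data generated by y = 2 * x, so the best w is 2.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w = 0.0                 # arbitrary starting guess
learning_rate = 0.01    # assumed step size, small enough to converge here
initial_loss = mse_loss(w, xs, ys)

# Repeatedly step against the gradient to reduce the loss.
for _ in range(500):
    w -= learning_rate * mse_gradient(w, xs, ys)

final_loss = mse_loss(w, xs, ys)
print(round(w, 4), initial_loss, final_loss)
```

Swapping in a different loss function would change the gradient and, in general, the parameter value the procedure settles on, which is the sense in which the loss defines what a good prediction is.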