What is Delta log?

The Delta Lake transaction log (also known as the DeltaLog) is an ordered record of every transaction performed on a Delta Lake table since its creation.
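To make the idea of an ordered record concrete, here is a minimal sketch in plain Python that replays a list of commits to work out which data files are active at a given version. The commit contents, file names, and the `live_files` helper are hypothetical and heavily simplified; real log entries carry many more fields, and this is not Delta Lake's own code.

```python
# Hypothetical, simplified commits: each commit is a list of single-key
# action dicts, loosely modelled on the "add" and "remove" actions that
# appear in Delta log files. Index in the list = table version.
commits = [
    [{"add": {"path": "part-0000.parquet"}}],
    [{"add": {"path": "part-0001.parquet"}}],
    [
        {"remove": {"path": "part-0000.parquet"}},
        {"add": {"path": "part-0002.parquet"}},
    ],
]


def live_files(commits, version):
    """Replay commits 0..version in order and return the active data files."""
    files = set()
    for actions in commits[: version + 1]:
        for action in actions:
            if "add" in action:
                files.add(action["add"]["path"])
            elif "remove" in action:
                files.discard(action["remove"]["path"])
    return files


print(sorted(live_files(commits, 1)))  # ['part-0000.parquet', 'part-0001.parquet']
print(sorted(live_files(commits, 2)))  # ['part-0001.parquet', 'part-0002.parquet']
```

Because the state at any version is derived by replaying the log in order, an older version of the table can be reconstructed simply by stopping the replay earlier.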

What is Delta Lake in Databricks?

Delta Lake is an open-source storage layer that brings reliability to data lakes. It provides ACID transactions and scalable metadata handling, and it unifies streaming and batch data processing. Delta Lake runs on top of your existing data lake and is fully compatible with Apache Spark APIs.

What is Delta Lake format?

Delta Lake is an open format storage layer that delivers reliability, security and performance on your data lake — for both streaming and batch operations.

What is a CRC file in Delta Lake?

A CRC (cyclic redundancy check) file helps Spark optimize its queries by providing key statistics about the data. The JSON file, by contrast, captures much more information. If you read the JSON file and list its columns, you will see four of them: add, commitInfo, metaData, and protocol.
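The four columns can be seen without Spark by treating a commit file as newline-delimited JSON and collecting the top-level key of each line. The sketch below writes a hand-made, heavily simplified stand-in for a first commit file into a temporary directory and then inspects it; the field values and the `action_types` helper are illustrative assumptions, not output from a real table.

```python
import json
import tempfile
from pathlib import Path

# Hand-written, simplified stand-in for the lines of a first commit file.
# Real files contain many more fields per action.
sample_lines = [
    {"commitInfo": {"operation": "WRITE"}},
    {"protocol": {"minReaderVersion": 1, "minWriterVersion": 2}},
    {"metaData": {"id": "example-table-id", "partitionColumns": []}},
    {"add": {"path": "part-0000.parquet", "size": 1024, "dataChange": True}},
]


def action_types(commit_path):
    """Return the sorted top-level keys found across all lines of a commit file."""
    keys = set()
    with open(commit_path, encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if line:
                keys.update(json.loads(line).keys())
    return sorted(keys)


with tempfile.TemporaryDirectory() as tmp:
    commit = Path(tmp) / f"{0:020d}.json"
    commit.write_text(
        "\n".join(json.dumps(a) for a in sample_lines), encoding="utf-8"
    )
    found = action_types(commit)

print(found)  # ['add', 'commitInfo', 'metaData', 'protocol']
```

When Spark reads such a file as JSON, each distinct top-level key becomes a column, which is why the four names show up as columns.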

How do delta tables work?

Delta Lake acts as a middle layer between the Spark runtime and cloud storage. When you store data as a Delta table, the data is stored by default as Parquet files in your cloud storage. The delta files are JSON files with sequentially increasing names, and together they make up the log of all changes that have occurred to a table.
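The sequential naming can be illustrated with a short sketch. It assumes the common layout in which commit files live in a `_delta_log` directory and are named after the table version, zero-padded to 20 digits; the helper names `commit_file_name` and `latest_version` are hypothetical, and the files written here are empty placeholders rather than real commits.

```python
import tempfile
from pathlib import Path


def commit_file_name(version):
    """Build a commit file name: the version zero-padded to 20 digits."""
    return f"{version:020d}.json"


def latest_version(log_dir):
    """Return the highest version found in log_dir, or -1 if there is none."""
    versions = [
        int(p.stem) for p in Path(log_dir).glob("*.json") if p.stem.isdigit()
    ]
    return max(versions, default=-1)


with tempfile.TemporaryDirectory() as tmp:
    log_dir = Path(tmp) / "_delta_log"
    log_dir.mkdir()
    # Write three placeholder commits: versions 0, 1 and 2.
    for v in range(3):
        (log_dir / commit_file_name(v)).write_text("{}\n", encoding="utf-8")
    newest = latest_version(log_dir)
    next_name = commit_file_name(newest + 1)

print(newest, next_name)
```

Zero-padding keeps the lexicographic order of the file names identical to the numeric order of the versions, so a plain directory listing already returns the log in commit order.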

What is Spark Delta Lake?

Delta Lake is a technology developed by the same developers as Apache Spark. It is designed to bring reliability to your data lakes: it provides ACID transactions and scalable metadata handling, and it unifies streaming and batch data processing.

What is Delta format in Databricks?

Delta Lake is a data format based on Apache Parquet. You can use the Delta Lake format from notebooks and applications running in Databricks through various APIs (Python, Scala, SQL, etc.), and also with Databricks SQL. Delta Lake is made of several components, including Parquet data files that may or may not be organized into partitions.

Is Delta Lake parquet?

Delta Lake uses versioned Parquet files to store your data in your cloud storage.

Why is Delta Lake needed?

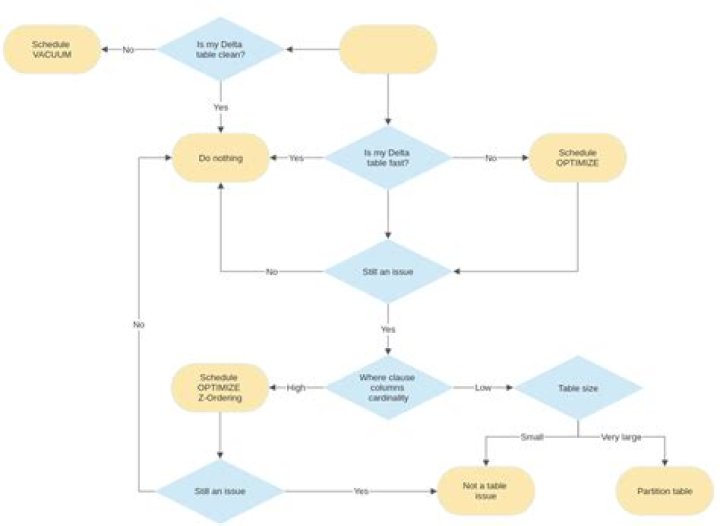

Delta Lake provides snapshot isolation, which supports concurrent read and write operations and enables efficient inserts, updates, deletes, and rollbacks. It also allows background file optimization through compaction and Z-ordering, which improves query performance.
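The Z-order idea mentioned above comes from the Z-order (Morton) curve, which maps several column values to a single sort key by interleaving their bits, so that rows close together in every column tend to land close together in the sorted data. The following is a minimal sketch of that general technique for two small non-negative integers. It is not Delta Lake's actual implementation, and the `interleave_bits` function and sample points are made up for illustration.

```python
def interleave_bits(x, y, bits=16):
    """Interleave the low `bits` bits of x and y into one Morton (Z-order) code.

    Bit i of x goes to position 2*i, bit i of y goes to position 2*i + 1.
    """
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (2 * i)
        code |= ((y >> i) & 1) << (2 * i + 1)
    return code


# Hypothetical (x, y) column values for six rows.
points = [(0, 0), (3, 3), (0, 1), (1, 0), (1, 1), (2, 2)]

# Sorting by the interleaved code groups rows that are near each other
# in both dimensions, instead of favouring only the first column.
ordered = sorted(points, key=lambda p: interleave_bits(*p))
print(ordered)  # [(0, 0), (1, 0), (0, 1), (1, 1), (2, 2), (3, 3)]
```

When data files are written in this order, each file covers a narrow range of both columns, so a filter on either column can skip more files than a sort on a single column would allow.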

What is Databricks Photon?

Photon is a new execution engine on the Databricks Lakehouse platform that provides extremely fast query performance at low cost for SQL workloads, directly on your data lake. With Photon, most analytics workloads can meet or exceed data warehouse performance without actually moving any data into a data warehouse.

What is DBFS in Databricks?

The Databricks File System (DBFS) is a distributed file system mounted into a Databricks workspace and available on Databricks clusters. It allows you to mount storage objects so that you can seamlessly access data without requiring credentials.