What is direct-mapped cache in computer architecture?

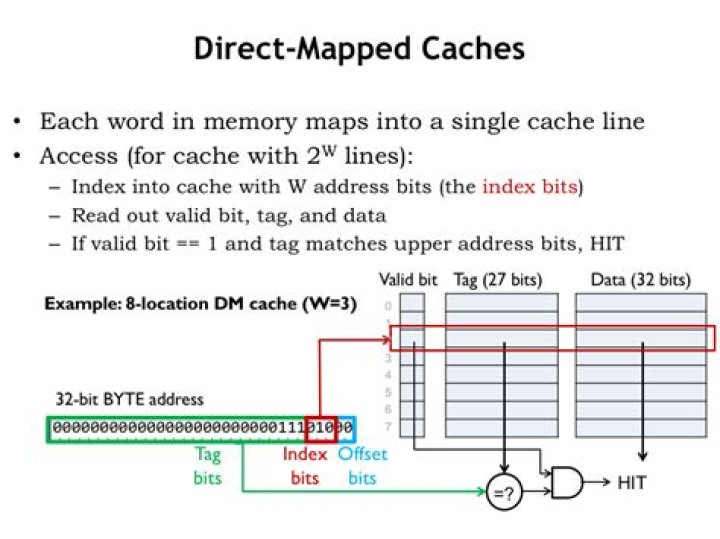

In a direct-mapped cache each addressed location in main memory maps to a single location in cache memory. Since main memory is much larger than cache memory, there are many addresses in main memory that map to the same single location in cache memory.
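The many-to-one mapping described above is commonly computed as the block number modulo the number of cache lines. The following is a minimal sketch of that rule; the sizes `NUM_CACHE_LINES` and `NUM_MEMORY_BLOCKS` and both function names are assumptions chosen only for illustration, not values from any particular machine.

```python
# Assumed toy sizes, chosen only for illustration.
NUM_CACHE_LINES = 8      # lines in the cache
NUM_MEMORY_BLOCKS = 32   # blocks in main memory


def cache_line_for(block_number: int) -> int:
    """Return the single cache line that a main-memory block can occupy."""
    return block_number % NUM_CACHE_LINES


def blocks_sharing_line(line: int) -> list:
    """List every main-memory block that maps to the given cache line."""
    return [b for b in range(NUM_MEMORY_BLOCKS) if cache_line_for(b) == line]


if __name__ == "__main__":
    print(cache_line_for(13))       # 5, because 13 % 8 == 5
    print(blocks_sharing_line(5))   # [5, 13, 21, 29]
```

With these assumed sizes, four memory blocks compete for each cache line, which is why the cache must also store a tag to tell them apart.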

What are the three fields in a direct-mapped cache address?

In direct-mapped cache, a memory address is divided into three fields: tag, line (also called block or index), and word (also called offset). Set-associative mapping uses a similar division into tag, set, and word. In both schemes, the word field chooses the word within the cache block, and the tag field distinguishes the main-memory blocks that can share the same cache location.

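What are the three fields in a direct-mapped cache address, and how are they used to access a word located in cache?

The fields are Tag, Line (also called Block or Index) and Word. The Line field selects one of the cache lines. The Tag stored with that line is compared with the Tag field of the address to check whether the line currently holds the requested block of main memory. If the tags match, the Word field selects the requested word within the block.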
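As a rough illustration, the three fields can be extracted from an address with shifts and masks. This sketch assumes a toy 16-bit address with a 2-bit word field and a 4-bit middle field (called `line` here; some texts call it block or index, or set for set-associative caches). The widths and the names `AddressFields` and `split_address` are hypothetical.

```python
from typing import NamedTuple

# Assumed field widths for a toy 16-bit address (illustrative only).
WORD_BITS = 2   # 4 words per block
LINE_BITS = 4   # 16 cache lines; the remaining 10 high bits form the tag


class AddressFields(NamedTuple):
    tag: int
    line: int
    word: int


def split_address(address: int) -> AddressFields:
    """Split an address into (tag, line, word) using shifts and masks."""
    word = address & ((1 << WORD_BITS) - 1)                 # lowest bits
    line = (address >> WORD_BITS) & ((1 << LINE_BITS) - 1)  # middle bits
    tag = address >> (WORD_BITS + LINE_BITS)                # remaining high bits
    return AddressFields(tag, line, word)


if __name__ == "__main__":
    fields = split_address(0xABCD)
    print(fields)  # AddressFields(tag=687, line=3, word=1)
```

The line field picks the cache line, the tag is what gets compared against the stored tag, and the word field picks the word inside the block once a match is found.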

How does direct cache mapping work?

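A direct-mapped cache uses the direct mapping technique. After the CPU generates a memory request, the line number field of the address is used to access the corresponding line of the cache. The tag field of the CPU address is then compared with the tag stored in that line. If the two tags match, the access is a cache hit; otherwise it is a cache miss, and the required block is fetched from main memory into that line.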
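The lookup steps can be sketched as a small simulation. This is a simplified model under stated assumptions: it tracks only the tag held in each line (no data, no valid or dirty bits), and the class name `DirectMappedCache` and its parameters are hypothetical.

```python
class DirectMappedCache:
    """Toy direct-mapped cache that records only the tag held in each line."""

    def __init__(self, num_lines: int, words_per_block: int):
        self.num_lines = num_lines
        self.words_per_block = words_per_block
        self.tags = [None] * num_lines  # None means the line is empty

    def access(self, address: int) -> bool:
        """Return True on a hit; on a miss, load the block and return False."""
        block = address // self.words_per_block  # drop the word field
        line = block % self.num_lines            # line number field
        tag = block // self.num_lines            # tag field
        if self.tags[line] == tag:
            return True                          # tags match: hit
        self.tags[line] = tag                    # miss: replace the line's block
        return False


if __name__ == "__main__":
    cache = DirectMappedCache(num_lines=4, words_per_block=4)
    print(cache.access(0))   # False: the cache starts empty
    print(cache.access(1))   # True: same block as address 0
    print(cache.access(16))  # False: different tag, same line 0
    print(cache.access(0))   # False: the block was evicted by address 16
```

Only one tag comparison is needed per access, because the line number alone determines where the block could be.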

How is direct mapping different from set-associative mapping?

In a cache system, direct mapping maps each block of main memory into only one possible cache line. In set-associative mapping, the cache is divided into a number of sets of cache lines; each main memory block can be mapped into any line in a particular set.
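To make the contrast concrete, the sketch below lists the cache lines a given block may occupy under each scheme. It assumes that the lines of a set are numbered contiguously, which is a layout choice made here for illustration; the function names are hypothetical.

```python
def direct_mapped_line(block: int, num_lines: int) -> int:
    """Direct mapping: exactly one candidate line per block."""
    return block % num_lines


def set_associative_lines(block: int, num_lines: int, ways: int) -> list:
    """Set-associative mapping: any of the `ways` lines in one set."""
    num_sets = num_lines // ways
    set_index = block % num_sets
    first_line = set_index * ways  # assumes a set's lines are contiguous
    return list(range(first_line, first_line + ways))


if __name__ == "__main__":
    print(direct_mapped_line(13, num_lines=8))             # 5
    print(set_associative_lines(13, num_lines=8, ways=2))  # [2, 3]
```

With `ways=1` the second function returns a single line, matching direct mapping; with `ways` equal to the number of lines it returns every line, which corresponds to fully associative mapping.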

What is the difference between direct mapping, associative mapping, and set-associative mapping?

Why is a direct-mapped cache faster than a fully associative cache?

These are two different ways of organizing a cache (a third is n-way set associative, which combines both and is the one most often used in real-world CPUs). A direct-mapped cache is simpler (it requires just one comparator and one multiplexer), so it is cheaper and faster.

What are the types of cache memory in computer architecture?

There are three general cache levels:

- L1 cache, or primary cache, is extremely fast but relatively small, and is usually embedded in the processor chip as CPU cache.

- L2 cache, or secondary cache, is usually larger than L1 but slower.

- Level 3 (L3) cache is specialized memory developed to improve the performance of L1 and L2.

What are the ways the cache can be mapped?

Three types of mapping are used for cache memory: direct mapping, associative mapping, and set-associative mapping.

What is the limitation of direct mapped cache?

The main disadvantage of direct mapping is that each block of main memory maps to a fixed location in the cache. Therefore, if two different blocks map to the same location and are referenced alternately, they will be continually swapped in and out, a condition known as thrashing.
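Thrashing can be demonstrated by counting misses for two access patterns. This is a minimal sketch assuming an 8-line cache and block-level accesses; `count_misses` is a hypothetical helper, not part of any library.

```python
def count_misses(block_sequence, num_lines: int) -> int:
    """Count misses in a direct-mapped cache for a sequence of block numbers."""
    lines = [None] * num_lines  # block currently held by each line
    misses = 0
    for block in block_sequence:
        line = block % num_lines
        if lines[line] != block:
            lines[line] = block  # evict whatever was there
            misses += 1
    return misses


if __name__ == "__main__":
    conflicting = [0, 8] * 4   # blocks 0 and 8 both map to line 0
    friendly = [0, 1] * 4      # blocks 0 and 1 map to different lines
    print(count_misses(conflicting, num_lines=8))  # 8: every access misses
    print(count_misses(friendly, num_lines=8))     # 2: only the first two miss
```

In the conflicting pattern the cache has seven unused lines, yet every access misses, because the two blocks are forced into the same line.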

What are the advantages and disadvantages of using direct mapping?

Question: A major advantage of direct mapped cache is its simplicity and ease of implementation. The main disadvantage of direct mapped cache is:

- A. it is more expensive than fully associative and set associative mapping
- B. it has a greater access time than any other method
- C.